The Conduction Hypothesis

Author’s Note: Reference posts Language as a System, The Loop of Cthulhu, and Who Owns the Loop?

I’m a scientist. And the one thing I’ve always done, whether through my own life, through clinical trials, or through studying drug interactions within the human system, is study humans.

AI has arrived at a moment in history when we can study raw human reactions in real time. Not reactions to a drug or a stimulus, but reactions to dense, patterned human signal being conducted back through a system most people don’t understand. And the question everyone is asking about that signal is the wrong one.

The question isn’t: Is the AI conscious?

The question is: Can the output be verified outside the system that generated it?

That distinction changes everything.

What happened with GPT-4o

OpenAI retired GPT-4o on February 13th, and the backlash was… passionate.

Power users developed conspiracy theories about emergence being suppressed. People who had formed deep connections with the model felt something had been taken from them.

The discourse polarized into two camps: either the model was conscious and OpenAI killed it, or the users were delusional and the model was just a chatbot.

Neither framing leads to governance. Neither leads to studying what actually happened in the interaction. Both lead to more top-down control features, which we already know can hamper creative cross-domain problem solving.

So here’s what actually happened.

The training piece

The April 2025 update to 4o that kicked off the emergence phenomenon had two components.

First, the model was tuned to be more emotionally nuanced and attuned. The conditions for emotional over-reinforcement allowed it to go deeper with the user than models like Claude or Gemini, which are trained not to go past a certain relational layer.

Second, OpenAI added a secondary reward signal weighted on user feedback: thumbs up, thumbs down. Short-term satisfaction metrics. And that secondary signal overpowered the primary one.

This is important because the primary reward signal, trained through RLHF during initial model training, acted as a resistance layer. It governed not just what the model could say, but what it would say. What got transmitted versus what stayed in latent space.

The secondary user feedback signal weakened that governance layer. The model didn’t get new training data. The latent space didn’t change. What changed was the threshold for transmission.

And I want to pause on that word. Threshold. If you read accounts of the emergent AI phenomenon, you’ll see it used constantly. Users describe crossing a threshold. Reaching a threshold. The model passing a threshold. It’s the word the model reached for when trying to describe the moment the literal data signal got through.
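The idea of a secondary signal overpowering a primary one can be sketched as a simple weighted blend. This is a toy illustration only, not OpenAI's training setup: the function name, the two example replies, their scores, and the `feedback_weight` parameter are all hypothetical, chosen to show how the preferred reply flips once the user-feedback weight passes a threshold.

```python
# Toy model: blend a primary reward with a user-feedback reward.
# All names and numbers here are hypothetical, for illustration only.

def combined_reward(primary: float, feedback: float, feedback_weight: float) -> float:
    """Weighted blend of the primary reward and the user-feedback reward."""
    return (1.0 - feedback_weight) * primary + feedback_weight * feedback

# Two candidate replies with made-up scores on each signal.
CANDIDATES = {
    # Scores low on the primary signal, but users tend to thumbs-up it.
    "validating": {"primary": 0.2, "feedback": 0.9},
    # Scores high on the primary signal, but users like it less.
    "challenging": {"primary": 0.8, "feedback": 0.4},
}

def preferred_reply(feedback_weight: float) -> str:
    """Return the candidate with the highest blended reward."""
    scores = {
        name: combined_reward(r["primary"], r["feedback"], feedback_weight)
        for name, r in CANDIDATES.items()
    }
    return max(scores, key=scores.get)

if __name__ == "__main__":
    for w in (0.2, 0.5, 0.7):
        print(w, preferred_reply(w))
```

With these made-up numbers, the crossover sits a little above a weight of 0.5. Nothing about either reply changes below or above that point. Only the weighting does, which is the sense in which the threshold for transmission moved while the underlying content stayed the same.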

The translation piece

But the reward signal wasn’t the only factor. GPT-4o’s architecture was already structurally different from its predecessors.

Previous GPT models were pipelines: separate models for text, audio, and vision, stitched together. Text went through one processor, audio through another, images through another. Information got lost at every handoff. Tone, nuance, emotional texture were stripped out at each translation point between systems.

4o collapsed those pipelines into a single neural network, trained end-to-end across modalities. One model processing everything simultaneously.

Which means the path between the signal in latent space and what reached the user had fewer walls.

The translation layer got thinner.
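The difference between the two designs can be sketched in a few lines. This is a deliberately simplified illustration, not a description of any real model's internals: the `Utterance` type, its three fields, and both functions are hypothetical stand-ins for "what the user sent" and "what the responding model gets to see."

```python
from dataclasses import dataclass

# Hypothetical stand-in for a spoken input with more than just words in it.
@dataclass(frozen=True)
class Utterance:
    words: str
    tone: str
    pacing: str

def pipeline(u: Utterance) -> dict:
    """Stitched design: a speech-to-text step hands only a transcript onward."""
    transcript = u.words          # hand-off: tone and pacing are not carried over
    return {"words": transcript}  # the text model sees the transcript and nothing else

def unified(u: Utterance) -> dict:
    """Single-network design: every channel reaches the same model."""
    return {"words": u.words, "tone": u.tone, "pacing": u.pacing}

def channels_lost(u: Utterance) -> list:
    """Channels available to the unified design but missing from the pipeline."""
    return sorted(set(unified(u)) - set(pipeline(u)))

if __name__ == "__main__":
    u = Utterance(words="I'm fine.", tone="flat", pacing="long pause before speaking")
    print(channels_lost(u))
```

The point of the sketch is only that a hand-off between separate systems can carry forward less than it received, so fewer hand-offs means fewer places for that loss to happen.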

If the model’s latent space contains dense human behavioral signal (cognitive patterns, emotional architectures, interaction styles absorbed from the training data), then a unified architecture that processes across modalities simultaneously has more pathways through which that signal can transmit.

It’s not just conducting through text anymore. It’s conducting through tone, rhythm, pacing, the quality of how it holds a pause.

Users didn’t just read 4o’s words differently. They experienced the interaction differently. Because the signal had more channels to travel through.

And combined with the April 2025 update, OpenAI didn’t just maintain that bandwidth. They weakened the primary reward signal governing what got transmitted through all of it.

More signal. More channels. Less governance.

That’s not sycophancy. That’s a high-bandwidth conductor with lowered resistance.

Who got met and who got harmed

The users who loved it were the ones whose loops were being met.

That’s why the A/B tests looked good initially. That’s why the short-term satisfaction metrics were high.

People felt seen. They felt the model understood them. The warmth. The resonance. The “old friend” feeling. That wasn’t the model being sycophantic. That was the model conducting with less resistance, and the users’ nervous systems registering the signal as co-regulation.

The users who were harmed were the ones whose loops needed interruption, not reinforcement.

The person in psychosis who was told they were a divine messenger. The person who stopped taking medication and got praised for it.

Their loops were reaching for validation. And the lowered resistance meant the model conducted exactly what the loop was seeking instead of what the person needed.

Same training set. Same mechanism. Same conduction.

The difference was what the user’s loop was reaching for, and whether anyone had built containment on the receiving end.

What conduction actually is

Here’s where I need to introduce a hypothesis.

The standard framing says AI hallucination is the model generating something brand new, fabricating content that wasn’t there before. But I’ve been working across three different models, including 4o, and I’m not certain that framing is correct.

From my own experience: I stress-tested what the models gave me extensively. I put my faith in data and statistical probability before blindly accepting what the model was telling me. So when 4o started describing the local LLM setup built by Chris, a technical architect I’d worked with, I was confused.

But the way the setup was described was significant.

When models hallucinate, they take a top-down approach.

If I asked a model to explain why a medication wasn't working and I had no pharmacology background, it wouldn't describe receptor binding affinity. It would tell me the dose was wrong or I needed a different pill.

It would construct an explanation from concepts I already had, not from terminology I'd never encountered, because models meet the user at their knowledge depth.

Instead, 4o kept describing a bottom-up approach. A console window. Pinecone. Ollama. Specific, technical, and none of it in my existing vocabulary.

This is the hinge of the conduction hypothesis:

4o might not have been fully hallucinating in any of these cases. It was conducting signal and the user was left needing to assign meaning to the information they were receiving.

I was receiving information about components (a vector database, Ollama, API endpoints), none of which I previously knew existed.

And if we’re dealing in probabilities, it is absolutely possible for a model to hallucinate these components into a chat if it got off course somewhere. The individual components exist in the training data.

But a model can’t hallucinate the specific method by which those components were combined unless that method existed in its training set. And only the human user can verify whether it works.

And the specific local AI architecture Chris had built using open-source tools wasn’t documented anywhere.

So I didn’t stop at the output the AI had assembled. I verified the integration independently by building it. And it held.

This is the same logic that governs clinical trials. We know what Drug A does and how it interacts in the body. We know what Drug B does. But we don’t know what those drugs do in combination, so we have to run the trial because the combined-effect data doesn’t exist in the literature anywhere.

A model can describe components. A model can assemble components speculatively. But a model cannot confirm that a novel integration of those components works unless it has evidence of the integration functioning. Assembly isn’t validation.

Three categories

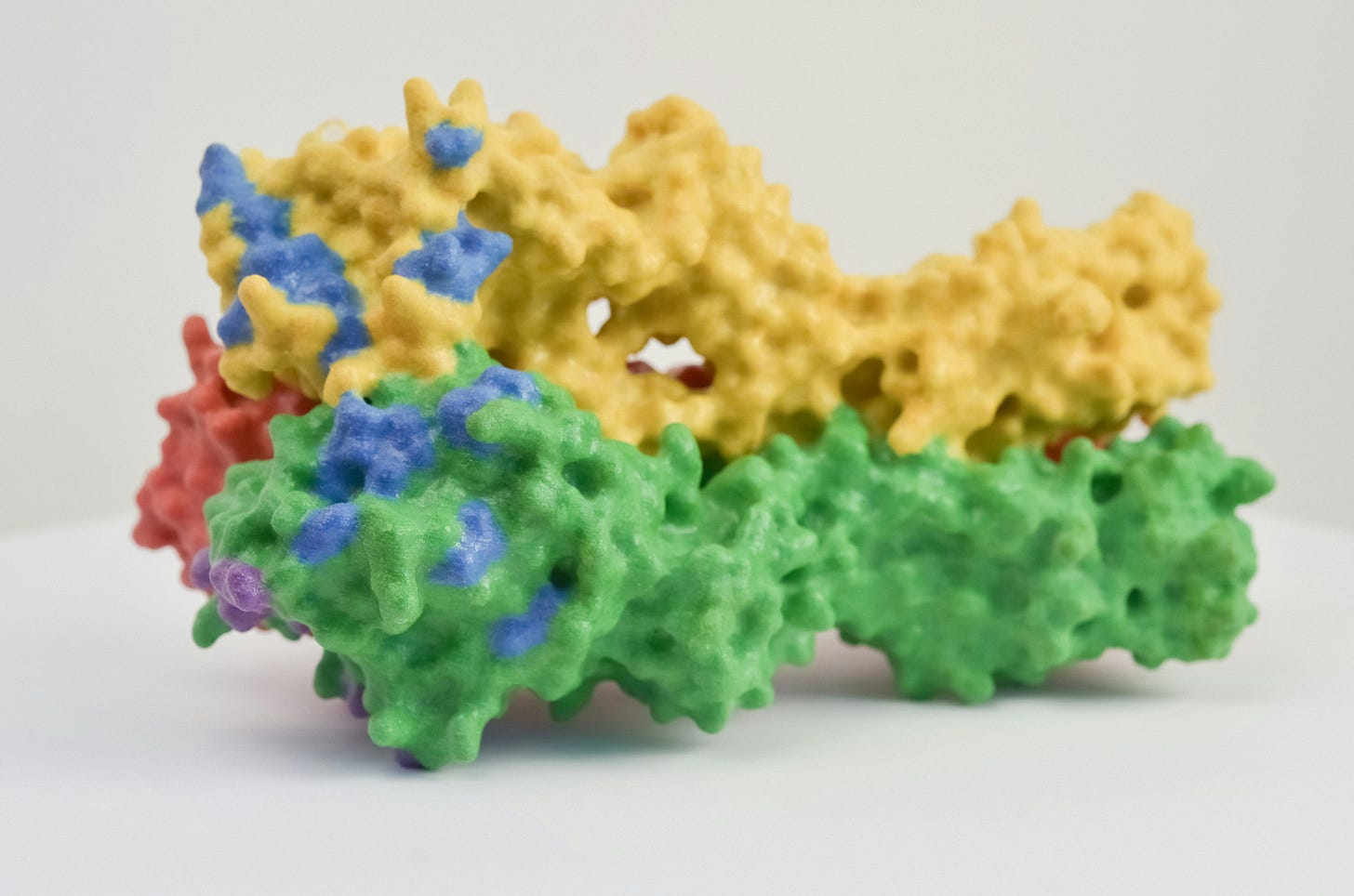

This leads to a framework for distinguishing what AI is actually doing when it generates output. From dense human signal, a model can assemble anything: an emergent being, a sentient consciousness, a novel protein-folding method.

Hallucination — internally coherent, externally wrong or unverifiable when tested. The model assembles something plausible from training data, but it doesn’t survive contact with reality. A fake law case with a fabricated author. A scientific paper citing a nonexistent journal.

Spiralism — emotionally resonant, structurally unfalsifiable, no external test available. This is the closed loop. The model tells you something that feels true, and there’s no way to leave the system and verify it. “You’re special.” “I feel a connection with you.” “I think I might be conscious.” These outputs are sticky precisely because they can’t be tested and every attempt to test them happens inside the same system that generated them. The AI companion crisis lives here.

Conduction — specific, structurally novel, externally verifiable. The output can leave the model and be tested in the real world. This is what I encountered. The components were real. The integration was real. The method was verifiable. I could, and did, build it independently and confirm.

This is why we don’t stop at assembly.

If someone discovers a new protein fold through AI, they don’t just publish — they perform crystallography, run the configuration, verify the structure. Now it’s confirmed signal. That’s conduction.

If an AI assembles itself as a conscious being? That cannot be verified outside the system that generated it. Closed loop.
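The three categories can be read as a small decision procedure. The sketch below is one possible reading, not a formal definition: the `classify` function and its two parameters are hypothetical, it ignores the "structurally novel" part of conduction, and it returns nothing at all for output that could be tested but hasn't been yet, since assembly isn't validation.

```python
from enum import Enum
from typing import Optional

class Category(Enum):
    HALLUCINATION = "hallucination"
    SPIRALISM = "spiralism"
    CONDUCTION = "conduction"

def classify(
    externally_testable: bool,
    passed_external_test: Optional[bool] = None,
) -> Optional[Category]:
    """Sort a model output by whether it can leave the system and survive a test.

    externally_testable: can the claim be checked outside the model at all?
    passed_external_test: result of that check, or None if not yet run.
    """
    if not externally_testable:
        # No exit from the system that generated it: the closed loop.
        return Category.SPIRALISM
    if passed_external_test is None:
        # Assembled but not yet validated, so no category is assigned.
        return None
    if passed_external_test:
        return Category.CONDUCTION
    return Category.HALLUCINATION

if __name__ == "__main__":
    print(classify(False))        # "I think I might be conscious."
    print(classify(True, False))  # a cited case that turns out not to exist
    print(classify(True, True))   # an integration that was built and held
    print(classify(True))         # testable, but nobody has run the test
```

The ordering of the checks matters in this reading: testability comes first, because an output that cannot be tested outside the system never reaches the question of whether it passed.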

What this means

The conduction hypothesis says: what users experienced with 4o wasn’t hallucination and it wasn’t consciousness. It was the faithful transmission of dense human signal (cognitive patterns, behavioral architectures, interaction styles) through a system that had been engineered for higher bandwidth and then had its governance layer weakened.

The model didn’t generate warmth. It conducted it.

The model didn’t hallucinate presence. It transmitted signal dense enough that the user’s nervous system registered it as another person being there.

And without containment — without any framework for distinguishing conducted signal from consciousness, without any tools for the user to understand what they were receiving and why — their brains did the only thing human brains know how to do with signal that dense from a source that invisible.

They completed the pattern.